Findmypast (FMP) software engineering and operational practices have changed considerably over the last few years. We’ve moved from a “classic” monolithic web service, where deployments to production were applied possibly weeks after the end of a sprint, to a microservices approach where we have continuous deployment to production and the majority of the FMP service is managed by Kubernetes.

The first blog post detailed the start of the journey, where we started to pick apart our monolithic code base and use microservices to deliver the FMP service. This second blog post continues the journey…

The Dark Days of Winter 2017

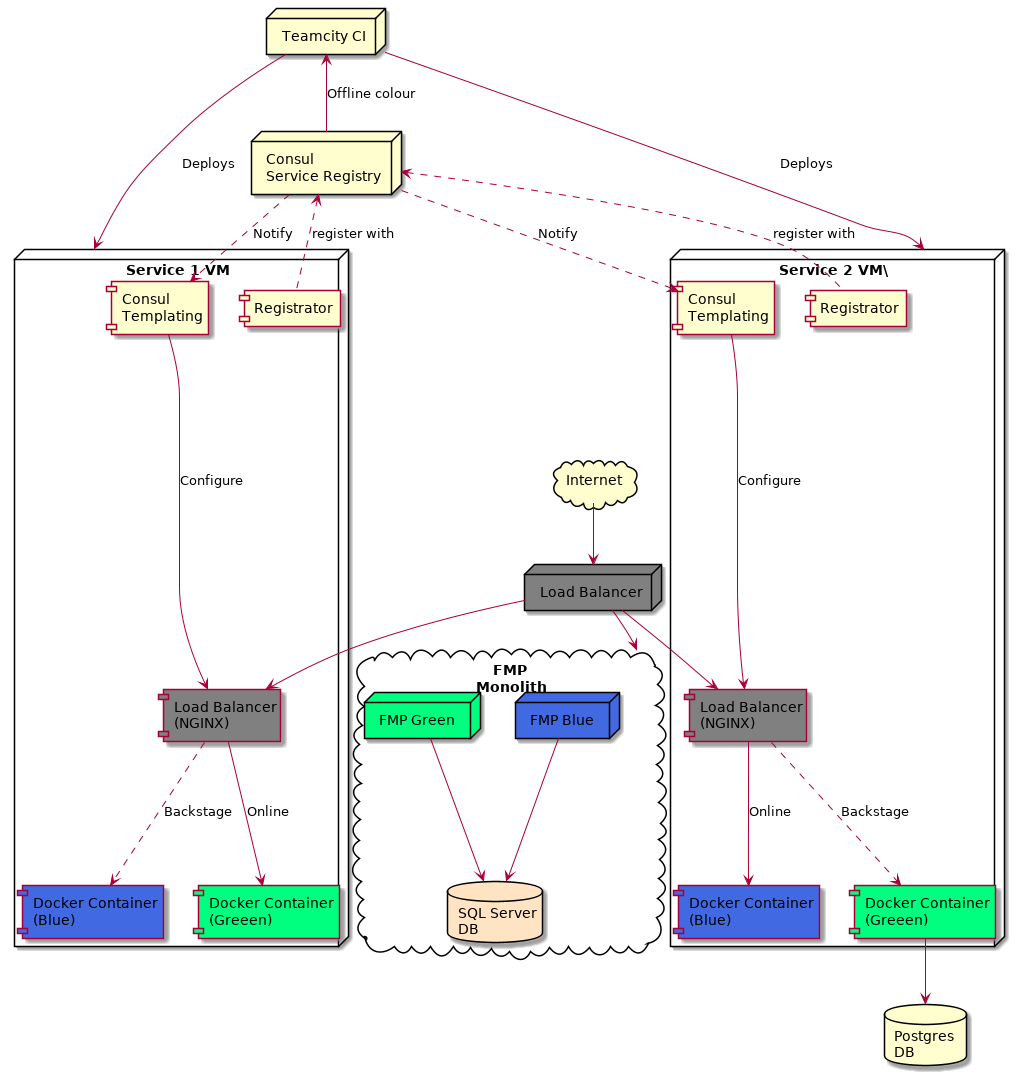

At the end of the last blog post, we had an infrastructure that used Consul as a service registry and NGINX acting as a reverse proxy into our docker containers. For a while, everything was fine, but as more microservices came online we started to see more and more failures with deployments. These failures were generally due to infrastructure issues.

These were dark days, with the team spending a lot of time fire-fighting issues with the deployments. We had a whole host of symptoms, including:

- NGINX routing to wrong containers, or not routing at all;

- Smoke tests on deployed containers failing, meaning the active live colour wouldn’t be flipped;

- The active colour being flipped but not reflected in the NGINX configuration.

The root of the problems was consul templating. It (apparently) couldn’t cope with the number of changes within Consul itself. It either crashed out when generating the NGINX template (which it did frequently) or simply got slower and slower. Monitoring of consul template showed a large number of messages being received. The end result was an invalid NGINX configuration, which then caused the routing issues. NGINX as a central routing point was a single point of failure. In those dark days, most of the team’s time was spent investigating consul template (and restarting consul template).

We had to find a quick solution and a number of options were discussed:

- Docker Swarm. Once again we looked at - and rejected - Docker Swarm to manage the containers. We were still concerned about docker memory issues taking out a whole node;

- A NodeJS and Elixir service discovery client was discussed. The idea here was that services, rather than relying on DNS to find a service, would use the service discovery client to determine the name (and port) of the service to talk to. This idea was rejected, partly because it would involve a lot of retro-fitting to existing microservices, but mainly because we didn’t feel it was the responsibility of the microservice to “discover” how to talk to another service. Essentially, it should just use a URI and let the infrastructure sort it out;

- To counter the above argument, a side-car model was discussed where we would inject a service discovery side-car into either the docker container or the host VM. The side-car would intercept network requests and route them accordingly. This was also rejected: it was complicated and no-one in the team was a networking expert.

The solution we finally settled on wasn’t universally loved, but it had the benefit of being a quick win: it was easy to implement (relative to the other options) and had minimal impact on existing microservices. Here’s what we ended up with:

The solution isn’t a million miles away from the earlier infrastructure. The main change is that rather than having NGINX/Consul templating as a single point of failure, we’ve shifted the load balancer (running as a docker container) down into each service VM. Consul templating need only update the configuration for that specific service, so the load on consul templating is spread across each service. In this architecture, consul templating was much more stable.

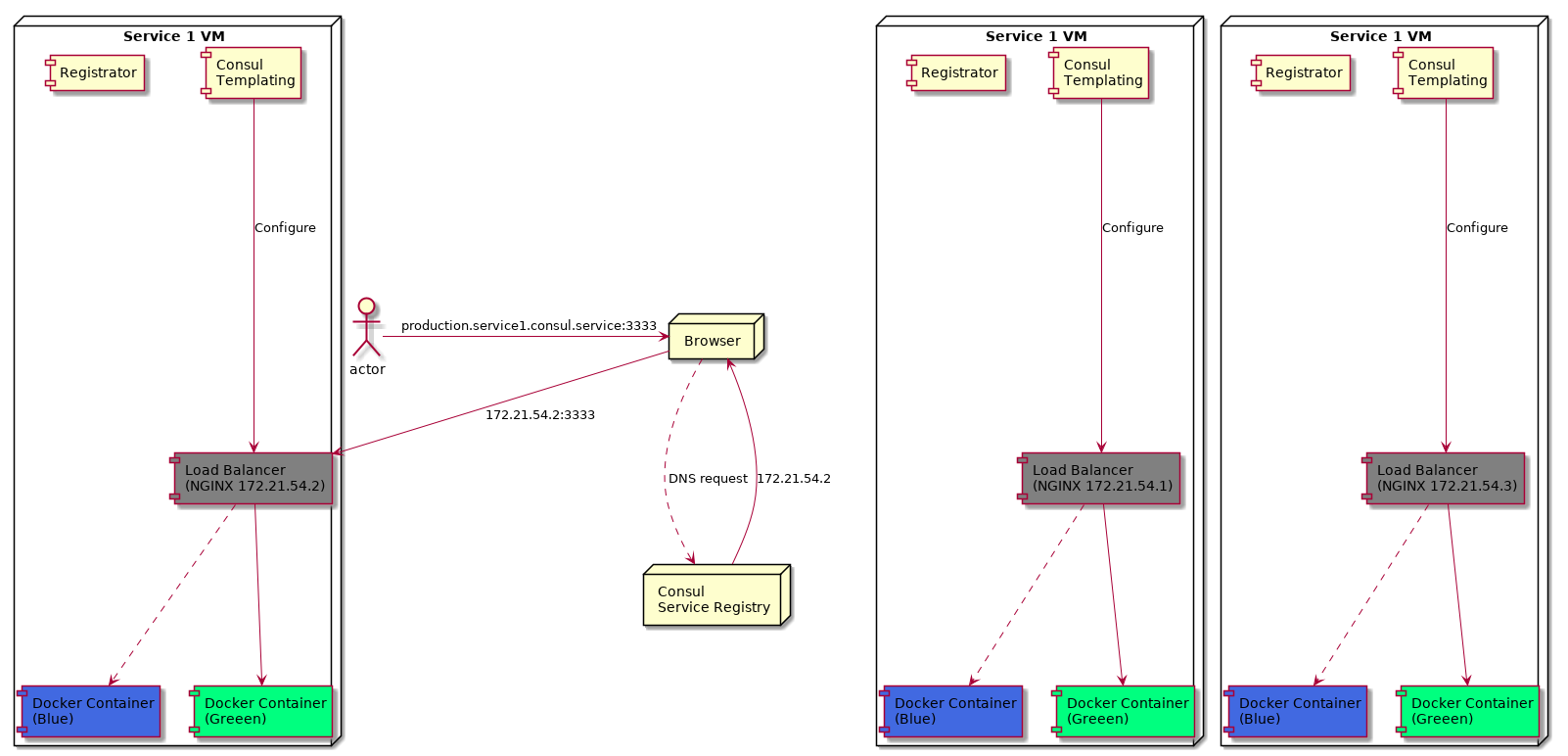
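To make the per-service templating idea more concrete, here is a minimal sketch of what generating the load balancer configuration for a single service might look like. It is not our actual consul template; the `Container` shape, the `render_upstream` function and the example addresses are all hypothetical. The point is only that each VM renders a small configuration for its own service, rather than one central NGINX rendering the configuration for every service.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Container:
    """One registered container for a service (hypothetical shape)."""
    host: str
    port: int
    healthy: bool


def render_upstream(service: str, containers: list[Container],
                    listen_port: int = 3333) -> str:
    """Render an NGINX fragment that balances across the healthy containers."""
    servers = [f"    server {c.host}:{c.port};" for c in containers if c.healthy]
    if not servers:
        # NGINX rejects an upstream block with no servers, so fail loudly
        # instead of writing an invalid configuration.
        raise ValueError(f"no healthy containers for {service}")
    lines = [
        f"upstream {service} {{",
        *servers,
        "}",
        "server {",
        f"    listen {listen_port};",
        "    location / {",
        f"        proxy_pass http://{service};",
        "    }",
        "}",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    # Example data only: two containers on the local VM, one unhealthy.
    print(render_upstream("service1", [
        Container("127.0.0.1", 8001, healthy=True),
        Container("127.0.0.1", 8002, healthy=False),
    ]))
```

Because the input is only the containers for one service, a burst of changes elsewhere in the registry does not force this configuration to be regenerated.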

A major change - which isn’t obvious from the diagram - concerns service to service routing. How does a service call another service and route to the correct load balancer (which are now hosted on a range of VMs)? The approach here was to use the Consul Service Registry as a DNS server. Recall that Consul holds information about all the docker containers registered on the VMs, including the new NGINX load balancer containers. A hostname such as production.service1.consul.service would be resolved by Consul (via round robin) to one of the VMs running the service service1. The downside was that we needed to expose those load balancers on a specific port on the VM - we chose port 3333. Services could then call other services using a URL in the format {environment}.{service-name}.consul.service:3333. The hard coded port, in particular, really upset a few people in the team. It’s not service discovery, they would cry. However, it was a necessary evil in order to get the solution in place with very little pain.
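As a rough illustration of the address format, a helper along the following lines could build the address from an environment and service name. The function names and the `http` scheme are assumptions made for the example; only the {environment}.{service-name}.consul.service:3333 pattern comes from our setup.

```python
LOAD_BALANCER_PORT = 3333  # the fixed port the load balancers are exposed on


def service_address(environment: str, service_name: str) -> str:
    """Build the Consul DNS address (host:port) for a service's load balancer."""
    for label in (environment, service_name):
        # Each part becomes a single DNS label, so it must be non-empty
        # and must not contain a dot of its own.
        if not label or "." in label:
            raise ValueError(f"invalid DNS label: {label!r}")
    return f"{environment}.{service_name}.consul.service:{LOAD_BALANCER_PORT}"


def service_url(environment: str, service_name: str, path: str = "/") -> str:
    """Build a full URL; the http scheme is an assumption for this sketch."""
    return f"http://{service_address(environment, service_name)}{path}"


if __name__ == "__main__":
    print(service_address("production", "service1"))
    print(service_url("production", "service1", "/health"))
```

The calling service never learns which VM or container it will reach; it only knows the name and the fixed port, which is exactly the trade-off that upset people.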

The diagram also doesn’t make clear the redundancy we gained by moving the load balancer down into the VM. Each service had at least 3 VMs associated with it:

A service URL was resolved using Consul as the DNS server. In the above example, we have three VMs, each with a load balancer exposed on port 3333, and IPs of 172.21.54.1, 172.21.54.2 and 172.21.54.3. Consul DNS will round robin through those (healthy) IPs. Should one of the nodes fail, or the load balancer health checks fail, then the IP is removed from the healthy upstream list and Consul won’t return that IP in response to DNS requests.
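The behaviour described above can be modelled with a few lines of code. This is a toy model for illustration, not how Consul is implemented: the `RoundRobinResolver` class and its methods are hypothetical, and the IPs are the ones from the example.

```python
from __future__ import annotations


class RoundRobinResolver:
    """Toy model of DNS answers that rotate through healthy nodes only."""

    def __init__(self, nodes: dict[str, bool]) -> None:
        self._nodes = dict(nodes)  # ip -> healthy flag
        self._next = 0

    def mark(self, ip: str, healthy: bool) -> None:
        """Record the latest health check result for a node."""
        self._nodes[ip] = healthy

    def resolve(self) -> str:
        """Return the next healthy IP, skipping any failed nodes."""
        healthy = [ip for ip, ok in self._nodes.items() if ok]
        if not healthy:
            raise LookupError("no healthy nodes for this service")
        ip = healthy[self._next % len(healthy)]
        self._next += 1
        return ip


if __name__ == "__main__":
    resolver = RoundRobinResolver({
        "172.21.54.1": True,
        "172.21.54.2": True,
        "172.21.54.3": True,
    })
    print([resolver.resolve() for _ in range(3)])
    # A failed health check takes the node out of rotation.
    resolver.mark("172.21.54.2", healthy=False)
    print([resolver.resolve() for _ in range(4)])
```

With three VMs per service, losing one node reduces capacity but callers keep resolving to a working load balancer without any change on their side.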

From a microservice point of view, the changes required to make this happen were minimal:

- Update any service URLs in the source code to use the new consul.service:3333 addresses;

- Deploy a load balancer as part of the deployment process.

The latter was made easier by the automated tools we had already developed in house to scaffold up services and automate the CI build pipeline.

Towards the end of 2017, all microservices were updated with those changes, everything settled down and deployments were no longer failing. :grin:

With the deployments stabilised, we could turn our attention elsewhere. Hmmmm… what’s that shining over there in the distance? Could it be the fabled container orchestration tool Kubernetes? Why Sir! I do believe it is! Part 3 of the journey is about to begin!