Findmypast (FMP) software engineering and operational practices have changed considerably over the last few years. We’ve moved from a “classic” monolithic web service, where deployments to production were applied possibly weeks after the end of a sprint, to a microservices approach where we have continuous deployment to production and the majority of the FMP service is managed by Kubernetes.

We didn’t jump straight into Kubernetes; the path there was long and winding. This set of posts documents that journey.

The Monolith

Back in days of yore - 2015 - the Findmypast web service was a C# ASP.NET MVC web application with a SQL Server backend. Pretty standard stuff back then. The code base was split into the main FMP website along with a number of common library assemblies.

Our development process was sprint based. We branched off master, coded for a couple of weeks and, if the feature was ready, pushed the changes to our integration environment.

Our QA team would test the feature changes on integration and, if all went well, changes in the branch would be merged into master and then deployed into production.

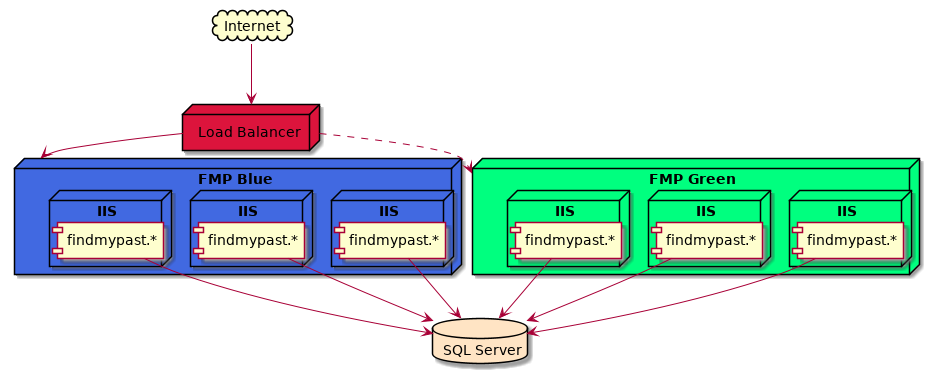

We used blue-green deployment to deploy changes to production. 20 Windows servers running IIS were allocated to the green bank, while another 20 were allocated to the blue bank.

In the example above, the blue bank is serving web site traffic while the green bank is offline. Deployment involves updating the green bank with the latest changes, running automated tests against that bank, and then configuring the load balancer to switch colours and direct traffic to the green bank. In case of disaster, rolling back the site was as easy as switching back to the now-offline colour.

Scaling the site involved deploying the same code to both banks and configuring the load balancer to use all hosts in both banks. (We call this cyan deployment; we used it when we expected a lot of traffic to hit the site.)
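The three load balancer states described above (blue live, green live, or both banks live as “cyan”) can be modelled in a few lines. This is an illustrative Python sketch only, not our actual tooling: the host names, the `Mode` enum and the `live_hosts` and `switch` functions are all hypothetical.

```python
from enum import Enum


class Mode(Enum):
    BLUE = "blue"
    GREEN = "green"
    CYAN = "cyan"  # both banks serve traffic


# Hypothetical host names: 20 servers per bank, as described in the post.
BANKS = {
    "blue": [f"blue-{i:02d}" for i in range(1, 21)],
    "green": [f"green-{i:02d}" for i in range(1, 21)],
}


def live_hosts(mode: Mode) -> list[str]:
    """Return the hosts the load balancer should send traffic to."""
    if mode is Mode.CYAN:
        return BANKS["blue"] + BANKS["green"]
    return list(BANKS[mode.value])


def switch(mode: Mode) -> Mode:
    """Flip blue <-> green. The same flip serves for release and rollback."""
    if mode is Mode.CYAN:
        raise ValueError("cannot flip colours while both banks are live")
    return Mode.GREEN if mode is Mode.BLUE else Mode.BLUE
```

Releasing and rolling back are the same operation here, which is what made rollback cheap: calling `switch` twice returns to the original bank.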

So, why did we change?

We had a number of challenges that we wanted to overcome with the above approach:

- We couldn’t scale the service effectively. Sometimes the servers were underutilized. At other times, we needed to bring in the whole set of 40 servers to service the load. We couldn’t automate scaling of the service depending on load.

- Our release velocity was slow. Features could take weeks to be deployed to production. Defects found with the feature after it was pushed to production were investigated by the engineering team responsible for the feature. However, those feature changes would have been made weeks earlier - it’s hard for engineers to context switch from their current task to debug code written weeks ago.

- The gap between code being committed and finally deployed into production meant that it was sometimes hard to determine which code change was the root cause of a problem. That was compounded by the fact that a release would have involved multiple branches being merged into trunk. Identifying which branch/feature caused a problem wasn’t always straightforward.

- And, of course, different teams working on different branches can cause merge hell if they are working in the same areas of the codebase.

We wanted to deploy to production faster as well as spot and fix problems earlier. Also, we wanted “developer joy” - no painful merge conflicts, deployments that passed automated tests and went straight into production.

So, we started the process of migrating from the monolith to a microservice architecture. If you’re interested in how we approached breaking apart the monolith, check out Extracting a Microservice from a Monolith by Neil Crawford.

The microservice architecture

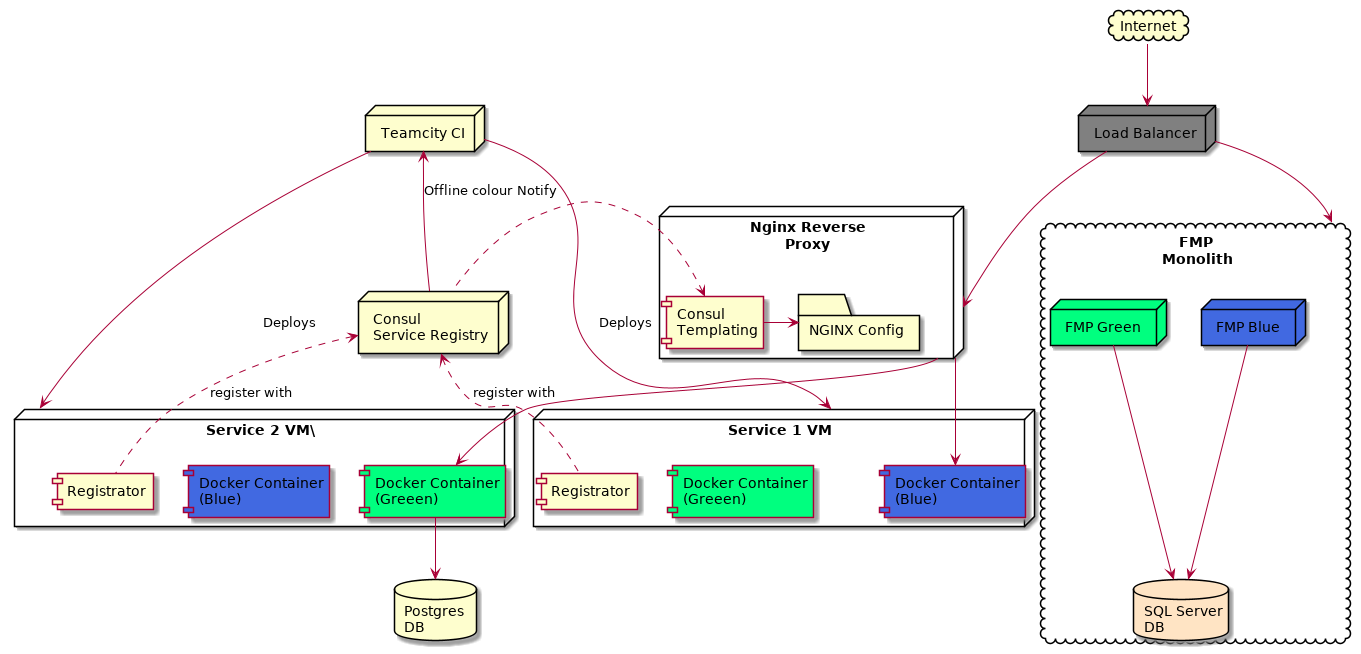

Our initial architecture looked a little like this:

(You may also want to watch the talk Deployment and Infrastructure at Findmypast, given by Sash from Findmypast, which also discusses this infrastructure.)

That’s a busy wee diagram, so let’s break it up a little.

Service Virtual Machines (VMs)

Each microservice had a number of Linux virtual machines, each of which had docker engine installed. Each service node typically had four docker containers running:

- A blue service container

- A green service container

- A registrator container

- A cAdvisor container to provide container instrumentation. (Not important to this discussion and not on the diagram!)

In our first incarnation of the infrastructure, we continued to use blue-green deployment. Service 1 VM, in the diagram above, has the blue container as the live/online colour while Service 2 VM has green as the online colour. Briefly - we’ll cover this in more depth later - we could easily swap between the two colours by routing to a different container in the VM.

The registrator container was responsible for monitoring the docker containers running on the VM and notifying the service registry (Consul) when containers were added or removed. Registrator would register the docker container IP address, external port and other metadata - such as the colour of the container.
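To make the registration step concrete, here is a rough Python sketch of the kind of record that ends up in Consul for one container. The field names (`ID`, `Name`, `Tags`, `Address`, `Port`) follow Consul’s agent service registration API; everything else is an assumption made for illustration: the `Container` class, the ID scheme, the IP address and the port are hypothetical, and this is not how registrator itself is implemented.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Container:
    service: str    # logical service name, e.g. "payments"
    colour: str     # "blue" or "green"
    host_ip: str    # IP address the container is reachable on
    host_port: int  # externally published port


def registration_payload(c: Container, environment: str = "production") -> dict:
    """Build a body in the shape Consul's service registration endpoint expects."""
    return {
        # Illustrative ID scheme only; it just needs to be unique per container.
        "ID": f"{c.host_ip}:{c.service}-{c.colour}:{c.host_port}",
        "Name": c.service,
        "Tags": [environment, c.colour],  # the colour travels as a tag
        "Address": c.host_ip,
        "Port": c.host_port,
    }


if __name__ == "__main__":
    example = Container("payments", "blue", "10.0.0.11", 32768)
    print(json.dumps(registration_payload(example), indent=2))
```

The important point is that the colour is part of the registered metadata, so anything reading the registry can tell blue and green containers of the same service apart.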

Continuous integration

Our CI pipeline (TeamCity) was responsible for building a tagged docker image for a service, publishing that image to our internal docker repository and deploying the image to the service VM.

Service Registry and Routing

The heart of the new infrastructure is the Consul service registry along with the NGINX reverse proxy. Consul is a registry that holds:

- The service name, specifically which docker containers are associated with a service;

- The IP address of those containers;

- The exposed port of those containers;

- Metadata about the container, such as the colour.

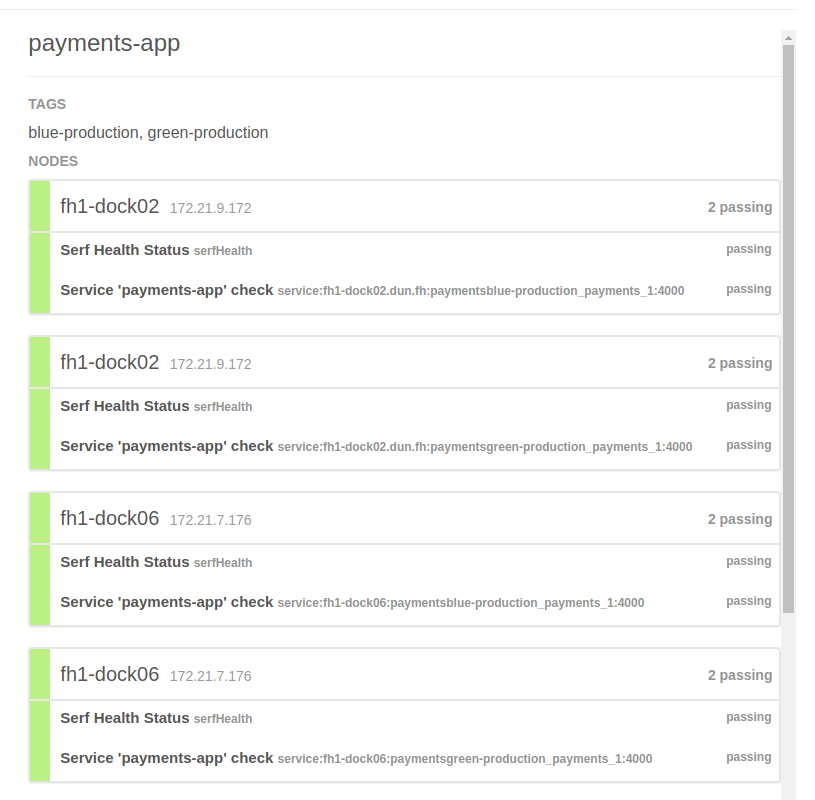

Here’s an example of a payments app service within Consul:

This service is tagged as a production service, along with the blue/green colour. It lists the nodes that the service-related docker containers are hosted on. Consul also supports health checks at the node (VM) level as well as the service level. If a node becomes unhealthy, then that node will be marked as unavailable. Similarly, if a docker container becomes unhealthy then that specific container on the node is marked as unavailable.
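The two levels of health check combine in a simple way: a container is only a routing candidate if both its node and the container itself are passing. A minimal Python sketch of that rule, assuming a hypothetical `Instance` record (the field names are ours, not Consul’s):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    node: str              # VM the container runs on
    address: str           # IP address registered for the container
    port: int              # exposed port
    colour: str            # "blue" or "green"
    node_healthy: bool     # result of the node-level check
    service_healthy: bool  # result of the container-level check


def routable(instances: list[Instance], colour: str) -> list[Instance]:
    """Keep instances of one colour whose node AND container checks both pass."""
    return [
        i for i in instances
        if i.colour == colour and i.node_healthy and i.service_healthy
    ]
```

For example, a healthy blue container on an unhealthy node is filtered out, exactly as an unhealthy container on a healthy node would be.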

So, Consul knows about a service and which healthy docker containers are associated with it. But Consul is a registry: it holds this information but doesn’t provide routing. (Actually, it is a DNS server as well, but a DNS lookup only gives us IP addresses, and we also need the docker container port, which that lookup doesn’t provide.) How do we use the information in the registry to route requests to the correct docker container?

To achieve that we use NGINX. The NGINX configuration file is generated on the fly by consul-template. Changes in the Consul service registry are picked up by the consul-template application running on the NGINX server. It then regenerates the NGINX configuration from a template file that reads the registry, and the NGINX routing is updated accordingly.
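The real templates are written in the templating tool’s own template language, which we won’t reproduce here. As a stand-in, this Python sketch shows the idea of turning a list of registered backends into an NGINX `upstream` block. The function name and the sample addresses are hypothetical.

```python
def render_upstream(service: str, backends: list[tuple[str, int]]) -> str:
    """Render an NGINX upstream block for the given (ip, port) backends."""
    if not backends:
        # Refuse to emit an empty upstream; NGINX would reject the config.
        raise ValueError(f"no backends available for {service}")
    lines = [f"upstream {service} {{"]
    # Sort so the same registry contents always produce identical output.
    lines += [f"    server {ip}:{port};" for ip, port in sorted(backends)]
    lines.append("}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_upstream("payments", [("10.0.0.12", 32770), ("10.0.0.11", 32768)]))
```

Producing deterministic output matters in a sketch like this: if the rendered text is unchanged, there is no need to reload NGINX.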

Blue-Green Deployment

Consul also holds a key-value (KV) store. We used the KV store to save information about the state of a service - including which colour is currently online. When we want to switch which colour is serving live traffic, we update the relevant key in the KV store. That change is picked up by consul-template, which rewrites the NGINX configuration to route to the new colour. The CI pipeline is responsible for the deployment:

- The CI gets the current offline colour from Consul, builds, tags and deploys a docker image to the service VMs, replacing the existing offline docker container with the new container;

- Automated smoke and acceptance tests are run against the new offline container;

- If all tests pass, TeamCity updates the colour key in the Consul KV store, forcing consul-template to update the NGINX config. The flip of a colour takes a few milliseconds while NGINX reloads its configuration.
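The three steps above can be sketched as a single function. This is a simplified Python model rather than our pipeline code: the KV store is a plain dict, the key layout (`<service>/live_colour`) is hypothetical, and the deploy and test steps are passed in as callables so the flow can be followed without any real infrastructure.

```python
from typing import Callable


def offline_colour(live: str) -> str:
    """Return the colour that is NOT currently serving traffic."""
    if live not in ("blue", "green"):
        raise ValueError(f"unknown colour: {live!r}")
    return "green" if live == "blue" else "blue"


def deploy(
    kv: dict[str, str],
    service: str,
    image_tag: str,
    deploy_container: Callable[[str, str, str], None],
    run_tests: Callable[[str, str], bool],
) -> bool:
    """Deploy to the offline colour, test it, and flip only if tests pass."""
    key = f"{service}/live_colour"  # hypothetical key layout
    target = offline_colour(kv[key])

    # Step 1: replace the offline container with the new image.
    deploy_container(service, target, image_tag)

    # Step 2: smoke and acceptance tests run against the offline container.
    if not run_tests(service, target):
        return False  # live colour untouched, users never see the bad build

    # Step 3: flip the key; the template watcher would then rewrite NGINX config.
    kv[key] = target
    return True
```

Note that a failed test run leaves the KV store unchanged, so the previous version keeps serving traffic and no rollback is needed.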

Scaling Deployments

Scaling the new services was not ideal. Each service had a dedicated number of VMs associated with it and each of these VMs only held the blue/green containers for that service. Scaling the infrastructure meant provisioning new VMs and then deploying the service containers onto the new VM.

Why did we go down the road of having only one set of service blue/green containers on a VM? We could have added many more service containers into the VM and registrator would have easily picked up on the container tags and registered with Consul. For that matter, why did we choose one service per VM, rather than using something like Docker Swarm?

The reason was stability. At the time, we found that Docker Swarm wasn’t production ready. For example, containers that exceeded their memory limits would sometimes bring down the entire node, wiping out every container on it. With enough VMs in the cluster this may not have been a big issue, but other issues with Docker Swarm forced us to keep it simple. It wasn’t elegant, but it worked.

Conclusion

FMP started off with a typical ASP.NET MVC stack and began the process of breaking up the monolithic codebase into microservices. Our development and deployment processes changed: we started to use Trunk Based Development and Repository Driven Development. We updated our CI pipeline to use shared deployment code, so all microservices were deployed in the same way. This enabled us to quickly roll out changes to the deployment pipeline to all services. All of these new approaches helped us to deliver more quickly into production. We committed small code changes often, and all changes pushed to trunk were deployed to production (after automated tests gave us the green light). Continuous deployment was becoming the norm within FMP. Code that wasn’t production ready was hidden behind feature toggles.

So, we were moving faster with regard to our development process, but the infrastructure still wasn’t strong enough. The next blog post tells the story of the dark age and the continuous struggle we had with the deployment infrastructure.